AI & APIs

Artificial intelligence technologies and concepts relevant to API documentation. This section covers AI tools, terminology, and practices that impact how technical writers create and enhance API documentation.

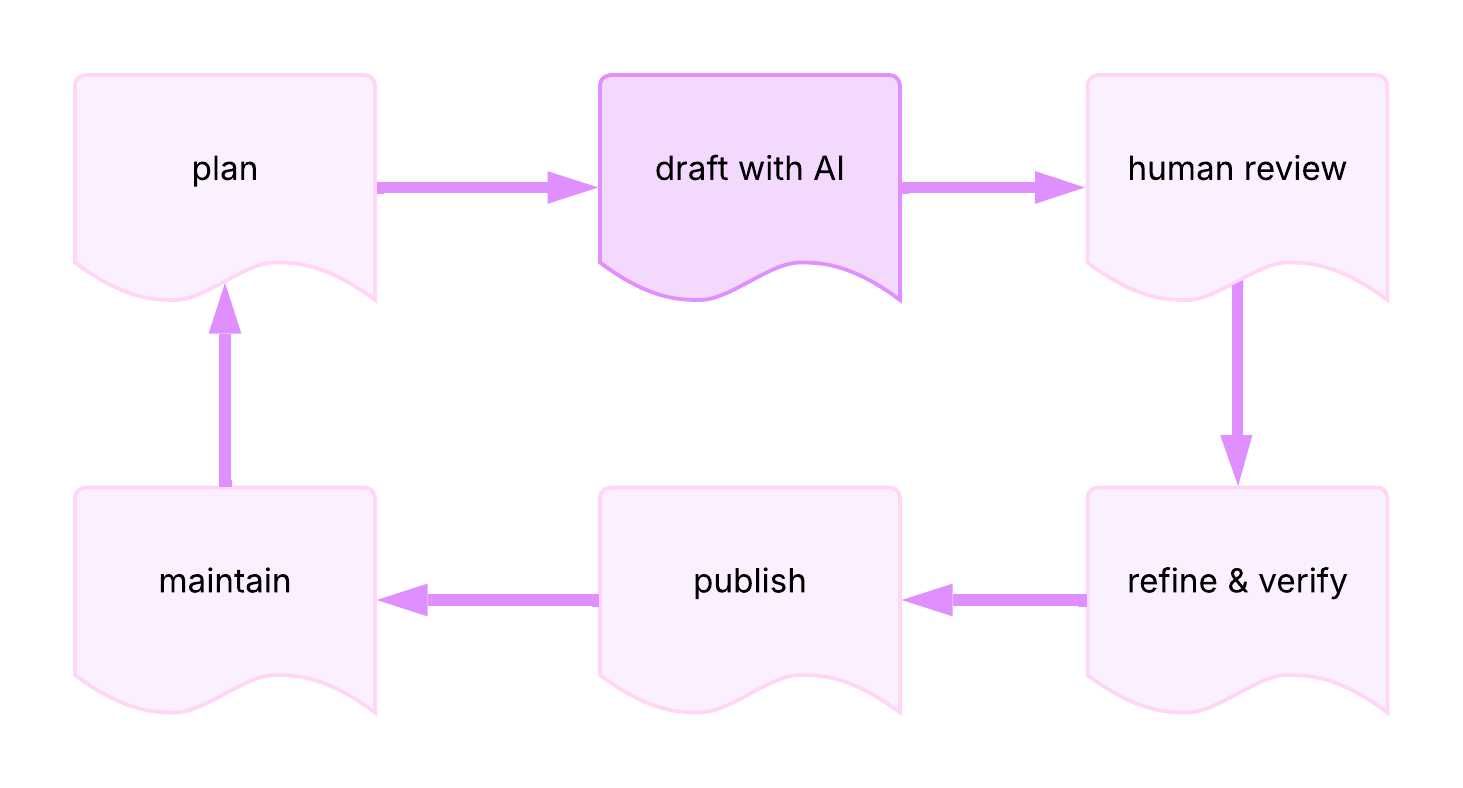

AI-assisted documentation workflow:

agent configuration

Definition: structured documentation files that define how AI agents behave within a project, including workflow rules, role specifications, coordination patterns, task procedures, and acceptance criteria; commonly written in Markdown

Purpose: enables tech writers to govern AI agent behavior using docs skills they already possess — progressive disclosure, audience analysis, IA, and editorial judgment — applied to an AI audience rather than a human one

5 agent config doc types

| Doc Type | Purpose | Docs Equivalent |
|---|---|---|
| Project Descriptions | Project-level rules and conventions loaded into every agent conversation, such as AGENTS.md and CLAUDE.md; most effective when minimal, since research shows verbose files reduce task success rates | Style guides, contributing guidelines |
| Agent Definitions | An agent's identity, capabilities, constraints, and escalation rules | Job descriptions, reviewer guidelines |
| Orchestration Patterns | How multiple agents hand off work, including quality gates and routing decisions | Workflow diagrams, RACI charts |
| Skills | Task-level procedures defining what needs to happen, what inputs are required, and what outputs are expected | SOPs, how-to guides |
| Plans and Specs | Requirements specifications that define what "done" looks like before work begins | Content briefs, acceptance criteria |

Key Research Findings: agents with AGENTS.md files completed tasks 29% faster and used 17% fewer tokens than agents without them. However, LLM-generated configuration files increased cost by 20% while reducing task success. Human-curated, minimal files outperform verbose or auto-generated ones.
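Because these findings favor short, human-curated project files, a team might add a simple size check to CI. The sketch below is a hypothetical lint. It assumes the project description lives in `AGENTS.md` and uses an arbitrary budget of 150 non-blank lines that you would tune yourself. Neither the threshold nor the function names come from the cited research.

```python
from pathlib import Path

# Hypothetical budget: the cited studies favor minimal files but prescribe no number.
MAX_NONBLANK_LINES = 150


def count_nonblank_lines(text: str) -> int:
    """Count lines that contain something other than whitespace."""
    return sum(1 for line in text.splitlines() if line.strip())


def check_agents_file(text: str, budget: int = MAX_NONBLANK_LINES) -> list[str]:
    """Return warnings for a project description file such as AGENTS.md."""
    warnings = []
    lines = count_nonblank_lines(text)
    if lines > budget:
        warnings.append(f"{lines} non-blank lines exceeds the budget of {budget}; consider trimming")
    return warnings


if __name__ == "__main__":
    path = Path("AGENTS.md")  # assumed location at the repository root
    if path.exists():
        for warning in check_agents_file(path.read_text(encoding="utf-8")):
            print(f"AGENTS.md: {warning}")
```

A line count is only a proxy. The research points to curation, not length alone, so a warning here is a prompt for editorial review rather than a hard failure.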

Related Terms: AI agent, information architecture, Large Language Model, Markdown, orchestration, progressive disclosure, prompt engineering

Sources:

- arXiv: 2601.20404 - Lulla et al., "On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents"

- arXiv: 2602.11988 - Gloaguen et al., "Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?"

- Dachary Carey: Agent-Friendly Documentation Spec

- Instruction Manuel: "The Most Actionable Docs Around: Agent Configs" by Manny Silva

- Vercel Blog: "AGENTS.md outperforms skills in our agent evals" by Jude Gao

AI

Definition: acronym for Artificial Intelligence; technologies that use computers and large datasets to perform tasks, make predictions, or solve problems that typically require human intelligence

Purpose: encompasses tools and techniques increasingly used in API documentation workflows, from content generation to automated testing

Related Terms: AI agent, genAI, Large Language Model, Machine Learning, Natural Language Processing, prompt engineering

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

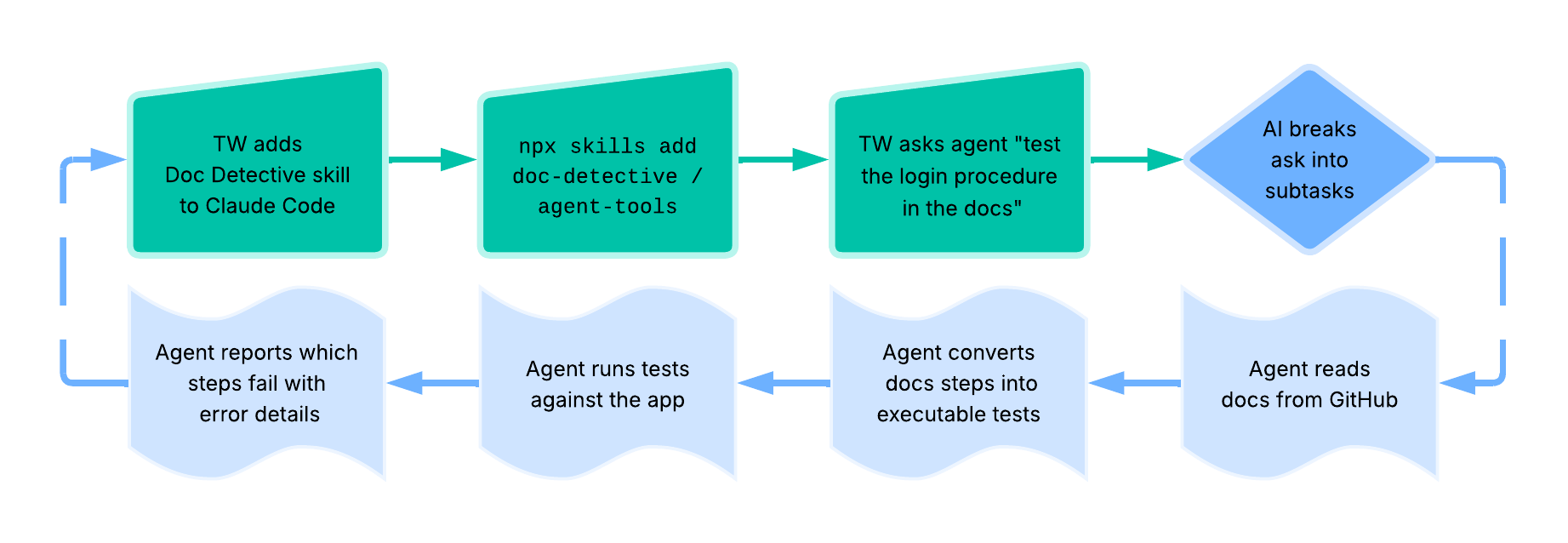

AI agent

Definition: single implementation and/or instance of an autonomous system that independently plans, uses tools, and takes actions to accomplish tasks on behalf of users; combines NLP - Natural Language Processing - with reasoning capabilities to break down complex goals into steps, gather information through external tools, and adapt its approach based on observations

Purpose: enables automation of complex, multi-step workflows that previously required human decision-making; particularly valuable in API documentation contexts for automating docs testing, generating code examples, maintaining accuracy across docs updates, and integrating with external systems through APIs and tools

How AI Agents Work: agents operate through a cycle of reasoning about the task, deciding which actions or tools to use, observing results from those actions, and adapting their plan accordingly; they maintain memory of past interactions, can call external APIs or tools, and continue iterating until the goal is accomplished or they determine they cannot proceed
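The reason, act, observe cycle can be sketched as a bounded loop. This is a minimal illustration, not any framework's API. The planner is a hypothetical rule-based stand-in for a model, and `lookup_endpoint` is a made-up tool. A real agent would ask an LLM to choose each next action.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    tool: str
    argument: str


@dataclass
class Agent:
    tools: dict[str, Callable[[str], str]]
    # Hypothetical planner: a real agent would call an LLM here.
    plan_next: Callable[[str, list[str]], Optional[Step]]
    memory: list[str] = field(default_factory=list)
    max_steps: int = 5

    def run(self, goal: str) -> list[str]:
        for _ in range(self.max_steps):
            step = self.plan_next(goal, self.memory)  # reason: decide the next action from past observations
            if step is None:                          # planner judges the goal accomplished
                break
            tool = self.tools.get(step.tool)
            if tool is None:                          # agent determines it cannot proceed
                self.memory.append(f"error: no tool named {step.tool}")
                break
            observation = tool(step.argument)         # act: call the tool
            self.memory.append(observation)           # observe: keep the result in memory
        return self.memory


def lookup_endpoint(name: str) -> str:
    """Hypothetical tool that returns a fake endpoint record."""
    catalog = {"payments": "POST /v1/payments requires OAuth 2.0"}
    return catalog.get(name, f"no record for {name}")


def simple_planner(goal: str, memory: list[str]) -> Optional[Step]:
    """Toy planner: look up the endpoint once, then stop."""
    if not memory:
        return Step(tool="lookup_endpoint", argument="payments")
    return None


agent = Agent(tools={"lookup_endpoint": lookup_endpoint}, plan_next=simple_planner)
print(agent.run("Document the payments endpoint"))
```

The `max_steps` cap is a design choice worth keeping even in real agents. It guarantees the loop ends when the planner never declares the goal done.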

agentic AI vs AI agent skills

Also known as compound AI systems, agentic AI refers to the broader field and architectural pattern of building autonomous AI systems; similar to how "a microservice" is an instance while "microservices architecture" is the pattern.

AI agent skills refer to modular folders of instructions, scripts, and resources that AI agents can discover and load on demand. Instead of hardcoding knowledge into prompts or creating specialized tools for every task, skills provide a flexible way to extend agent capabilities.

Skills are prose — Markdown files with frontmatter — not compiled code; they have the same authoring, maintenance, and quality requirements as any other docs, and the same failure modes when those requirements aren't met. Skills generated by AI without human editorial oversight introduce information decay: iterative LLM processing degrades factual content measurably across revision chains, producing outputs that read well but quietly diverge from ground truth. Tech writers are well-positioned to co-own skills as a docs channel — reviewing for accuracy, ensuring consistency with existing docs, and building evals or skill validators into CI pipelines to catch drift before it compounds.
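A skill validator in CI can start small. The sketch below assumes each skill is a folder containing a `SKILL.md` file whose YAML frontmatter declares `name` and `description`, the convention used by Anthropic's Agent Skills. The parser is deliberately naive: it reads simple `key: value` lines only and does not use a YAML library. The checks are illustrative, not an official validator.

```python
from pathlib import Path

REQUIRED_KEYS = ("name", "description")


def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse simple `key: value` frontmatter between leading '---' lines.

    Naive on purpose: nested YAML, lists, and multi-line values are not supported.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return meta
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return {}  # no closing delimiter: treat as missing frontmatter


def validate_skill(text: str) -> list[str]:
    """Return a list of problems found in one SKILL.md file."""
    meta = parse_frontmatter(text)
    problems = [f"missing frontmatter key: {key}" for key in REQUIRED_KEYS if not meta.get(key)]
    body = text.split("---", 2)[-1] if meta else text
    if not body.strip():
        problems.append("skill body is empty")
    return problems


def validate_skills_dir(root: Path) -> dict[str, list[str]]:
    """Validate every <skill>/SKILL.md under root; only files with problems are returned."""
    results = {}
    for path in sorted(root.glob("*/SKILL.md")):
        problems = validate_skill(path.read_text(encoding="utf-8"))
        if problems:
            results[str(path)] = problems
    return results
```

Structural checks like these catch missing metadata. They cannot catch factual drift, which still needs human review or content evals.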

Note: agentic AI is an emerging technology; humans must build sophisticated mental models to enable agents to develop the correct intuition to make effective, positive changes in software systems

Example: tech writer → Doc Detective → Claude Code skills workflow

Related Terms: agent configuration, AI, API documentation testing, docs-as-tests, eval, Large Language Model, Markdown, MCP server, metaprogramming, Natural Language Processing, orchestration, prompt engineering, RAG

Sources:

- IBM: "What Are AI Agents?" by Anna Gutowska

- Stripe: "Minions: Stripe's one-shot, end-to-end coding agents" by Alistair Gray

- GitHub: doc-detective/agent-tools

- Google Cloud: "What is agentic AI?"

- passo.uno: "Skills are docs, and docs need tech writers" by Fabrizio Ferri Benedetti

- Spring by VMware Tanzu: "Spring AI Agentic Patterns (Part 1): Agent Skills - Modular, Reusable Capabilities" by Christian Tzolov

- Wikipedia: "AI agent"

AI-assisted documentation

Definition: documentation created or enhanced using AI tools while maintaining human oversight for accuracy, technical correctness, and quality

Purpose: accelerates documentation workflows by handling repetitive tasks, allowing technical writers to focus on complex explanations, accuracy verification, and user experience

Example: using AI to generate initial drafts of API reference descriptions, then manually reviewing and enhancing with technical details and examples

Related Terms: AI, Clayton's Docs, docs theater, genAI, GitBook, Large Language Model, Mintlify

Source: UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

AI-assisted usability analysis

Definition: use of AI tools to analyze usability test results and identify patterns in user behavior or interface issues

Purpose: accelerates analysis of certain types of usability data while recognizing the limitations of AI in evaluating human factors

Appropriate use cases:

- Mechanical tests: language clarity, navigation patterns, consistency checks

- Pattern identification: recurring user errors, common interaction sequences

- Quantitative analysis: time-on-task, completion rates, click paths
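The quantitative measures above are plain arithmetic. That makes them a useful cross-check on any AI summary of the same sessions. The sketch below is minimal and assumes hypothetical session records, each with a task name, a completion flag, and seconds on task.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Session:
    task: str
    completed: bool
    seconds: float


def summarize(sessions: list[Session], task: str) -> dict[str, float]:
    """Completion rate and median time-on-task for one task.

    Time-on-task uses completed sessions only, a common but not universal convention.
    """
    relevant = [s for s in sessions if s.task == task]
    if not relevant:
        raise ValueError(f"no sessions recorded for task {task!r}")
    done = [s for s in relevant if s.completed]
    return {
        "completion_rate": len(done) / len(relevant),
        "median_seconds": median(s.seconds for s in done) if done else float("nan"),
    }


# Hypothetical sessions for illustration only
sessions = [
    Session("get-api-key", True, 95),
    Session("get-api-key", True, 140),
    Session("get-api-key", False, 300),
    Session("first-request", True, 60),
]
print(summarize(sessions, "get-api-key"))
```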

Limitations:

- Can't reliably assess human factors: credibility, perception, satisfaction, emotional responses

- AI capabilities and best practices evolve rapidly, requiring ongoing evaluation

- Results should supplement, not replace, human expertise in usability research

- Interpretation quality depends on the specific AI tools and prompts used

Note: this represents current perspectives on AI implementation into usability testing strategies and may evolve as AI capabilities develop

Related Terms: AI, guerrilla usability testing, usability testing

Source: UW API Docs: Module 4, Lesson 3, "Review usability testing for API"

AI bias

Definition: systematic errors or unfair outcomes in AI systems that reflect prejudices present in training data or model design

Purpose: awareness of AI bias ensures documentation teams critically assess AI-generated content rather than accepting it as authoritative, particularly for examples involving people, places, or cultural contexts

Related Terms: AI, training data

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

eval

Definition: abbreviation for "evaluation"; automated test for an AI system that measures whether the system's output meets defined success criteria for a given input

Purpose: enables API docs teams to validate AI agent behavior before deployment, catch regressions when models or prompts change, and define measurable success criteria that replace guesswork; evals compound in value over an agent's lifecycle — early evals force teams to specify what "done" looks like, while later evals protect against quality drift

Why this belongs in AI & APIs: describes a concept specific to AI system development and evaluation. Testing broadly belongs in Workflows & Methodologies, but evals are a distinct AI-specific practice with their own grader types, metrics, and infrastructure that differ meaningfully from traditional software testing. The term appears primarily in AI engineering contexts and addresses challenges unique to non-deterministic model behavior.

eval vs API docs testing

| | eval | API docs testing |
|---|---|---|
| Validates | AI system output meets defined criteria | Documented procedures work as written |
| Nature | Probabilistic | Deterministic |
| Measures | Model behavior | Content accuracy |
| Graded By | Criteria-driven rubrics, LLM judges, human review | Pass/fail test execution |
| Used When | Testing the AI layer of a docs workflow | Verifying the outputs that layer produces |

Example: a docs team using an AI agent to generate release notes builds an eval suite with three grader types — a deterministic style guide check using Vale, an LLM-as-judge rubric scoring accuracy and tone, and periodic human review to calibrate the LLM grader; when the team upgrades to a new model, the eval suite runs automatically and reveals that the new model over-summarizes breaking changes, giving the team a concrete regression to fix before shipping
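The skeleton of such a suite can be sketched in a few lines. This illustration rests on several assumptions. The model functions are hypothetical stand-ins for real model calls. A single deterministic grader (a required-facts check plus a banned-word check) substitutes for Vale and the LLM judge described above. The 0.05 regression tolerance is arbitrary. The sketch only shows how cases, graders, and a regression threshold fit together.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_mention: list[str]  # facts the output must include, such as breaking changes


BANNED_WORDS = ("simply", "just")  # hypothetical style rules standing in for a Vale check


def grade(output: str, case: EvalCase) -> bool:
    """Deterministic grader: all required facts present and no banned whitespace-separated words."""
    text = output.lower()
    has_facts = all(fact.lower() in text for fact in case.must_mention)
    clean = not any(word in text.split() for word in BANNED_WORDS)
    return has_facts and clean


def run_suite(generate: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Run every case through the system under test and return the pass rate."""
    passed = sum(grade(generate(case.prompt), case) for case in cases)
    return passed / len(cases)


def is_regression(new_rate: float, baseline_rate: float, tolerance: float = 0.05) -> bool:
    """Flag a model or prompt change whose pass rate drops by more than the tolerance."""
    return new_rate < baseline_rate - tolerance


cases = [
    EvalCase("Summarize v2.0 changes", must_mention=["removed /v1/users"]),
    EvalCase("Summarize v2.1 changes", must_mention=["renamed user_id"]),
]


def old_model(prompt: str) -> str:  # hypothetical output for illustration
    return "Breaking: removed /v1/users and renamed user_id to id."


def new_model(prompt: str) -> str:  # hypothetical output that over-summarizes
    return "Several endpoints changed."


baseline = run_suite(old_model, cases)
candidate = run_suite(new_model, cases)
print(baseline, candidate, is_regression(candidate, baseline))
```

Running the same cases against both models turns "the new model feels worse" into a concrete, reproducible failure.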

Related Terms: AI agent, deterministic testing, docs-as-tests, probabilistic testing

Sources:

- Anthropic PBC, Engineering at Anthropic: "Demystifying evals for AI agents"

- I'd Rather Be Writing: "Podcast: Doc testing, skills files, and the guardians of knowledge – with Manny Silva" by Tom Johnson, Fabrizio Ferri Benedetti

genAI

Definition: acronym for Generative AI; AI systems that create new content by identifying patterns in training data and using probability to generate text, images, or other media

Purpose: assists API documentation writers with drafting, editing, and formatting tasks while requiring human oversight for accuracy and quality

Example: using Claude or ChatGPT to draft initial API endpoint descriptions that writers then refine and verify

Related Terms: AI, Large Language Model, Machine Learning, prompt engineering

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

knowledge graph

Definition: also known as a KG; structured representation of knowledge using entities - concepts, objects, events - and the relationships between them, organized in a graph format that both humans and machines can query and reason about

Purpose: enables semantic understanding and intelligent querying of API documentation by representing relationships between endpoints, parameters, authentication methods, error codes, and other API concepts; supports AI-powered documentation search, chatbots, and automated content assembly

Example: a KG enables AI to traverse relationships to answer developer questions such as "What authentication does the payment endpoint need?" Instead of relying on keyword matching in documentation text, the KG reveals that the payment endpoint is protected by "OAuth 2.0", which requires client credentials - client_id, client_secret, and scope - and generates a bearer token used in requests
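The traversal in this example can be sketched with a graph stored as subject, predicate, object triples. The entity and relation names below are hypothetical, chosen to mirror the example. Production KGs typically use RDF stores or graph databases with their own query languages.

```python
from collections import defaultdict

# Hypothetical triples mirroring the payment example: (subject, predicate, object)
TRIPLES = [
    ("payment endpoint", "protected_by", "OAuth 2.0"),
    ("OAuth 2.0", "requires", "client_id"),
    ("OAuth 2.0", "requires", "client_secret"),
    ("OAuth 2.0", "requires", "scope"),
    ("OAuth 2.0", "issues", "bearer token"),
]


def build_index(triples):
    """Map each subject to its outgoing (predicate, object) edges."""
    index = defaultdict(list)
    for subject, predicate, obj in triples:
        index[subject].append((predicate, obj))
    return index


def neighbors(index, subject, predicate):
    """Objects reachable from subject along one predicate."""
    return [obj for pred, obj in index.get(subject, []) if pred == predicate]


def auth_requirements(index, endpoint):
    """Follow endpoint -> auth scheme -> required credentials and issued token."""
    answer = {}
    for scheme in neighbors(index, endpoint, "protected_by"):
        answer[scheme] = {
            "requires": neighbors(index, scheme, "requires"),
            "issues": neighbors(index, scheme, "issues"),
        }
    return answer


index = build_index(TRIPLES)
print(auth_requirements(index, "payment endpoint"))
```

The answer comes from following typed edges, not from matching words. That is why it holds even when the docs page never puts "payment" and "client_secret" in the same sentence.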


Related Terms: content, diagram, information architecture, liquid content, modular content, ontology, RAG, structured content

Sources:

Large Language Model

Definition: also known as an LLM; form of genAI trained on large amounts of text that generates human-like responses using deep learning and neural networks

Purpose: handles repetitive or foundational documentation tasks such as generating boilerplate descriptions, summarizing content, or translating text

Example: LLMs can draft initial OpenAPI specification descriptions or generate code examples in many programming languages

Related Terms: agent configuration, AI, AI agent, genAI, liquid content, metaprogramming, Natural Language Processing, orchestration, prompt engineering, RAG

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

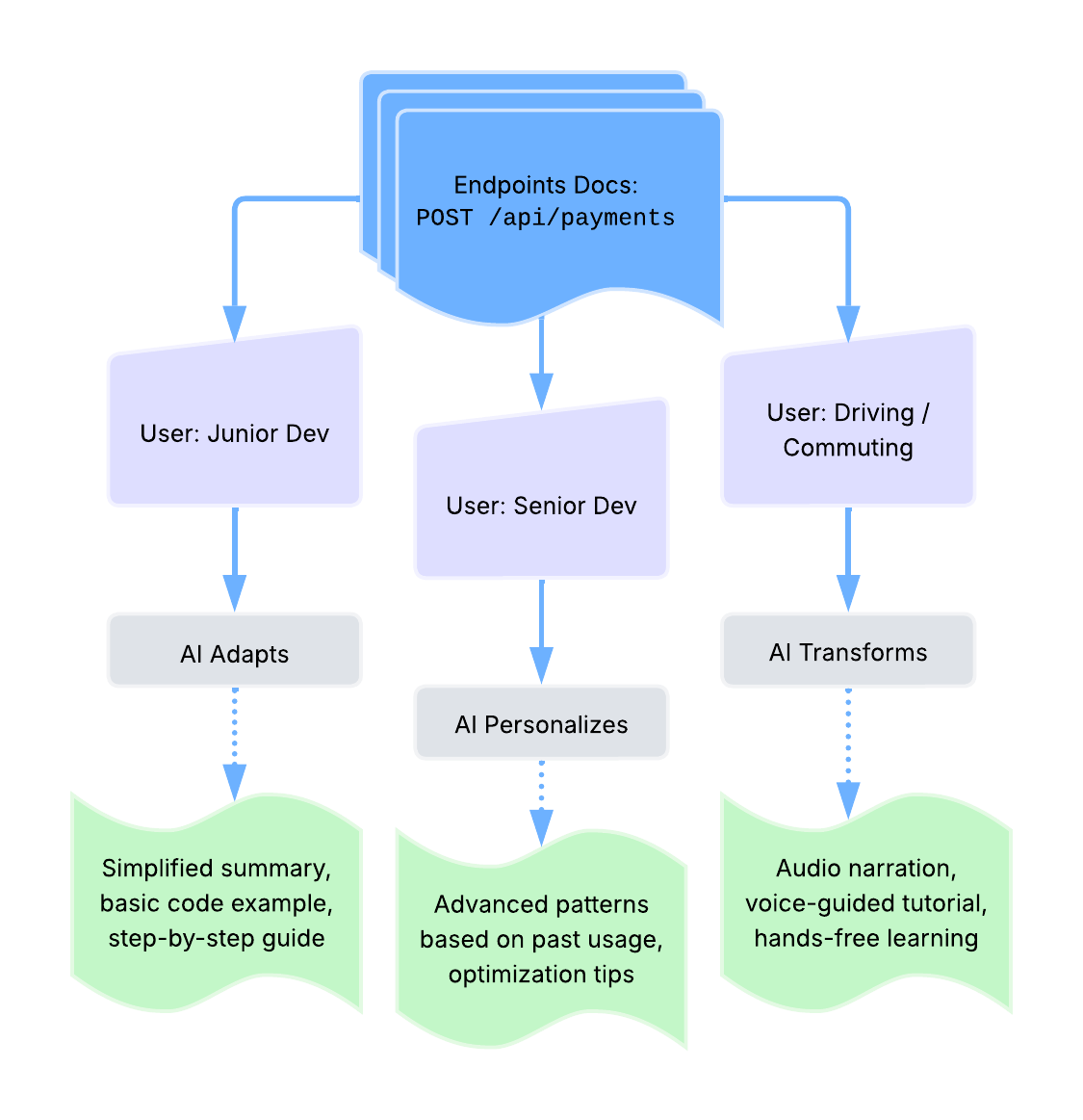

liquid content

Definition: content strategy that uses AI to transform and personalize kinetic content; content that adapts in real-time based on user context, preferences, or behavior, typically powered by LLMs to transform between formats - text, audio, video, summaries - while maintaining accuracy

Purpose: delivers personalized documentation experiences and enables content to flow across different formats and interfaces based on user needs; represents the AI-powered implementation of kinetic content principles where LLMs actively reshape content for different consumption modes

Why this belongs in AI & APIs: liquid content is directly tied to AI capabilities, has specific emerging use cases in publishing, and sits at the technical intersection of AI and content delivery, while other content strategies may or may not be AI-assisted

Example: liquid content transformation paths -

Related Terms: content, kinetic content, Large Language Model, modular content, real-time, structured content

Sources:

- Digiday Media: "WTF is liquid content?" by Sara Guaglione

- Reuters Institute: "Journalism, Media and Technology Trends and Predictions 2026" by Nic Newman

- Story Needle: "Are LLMs making content 'liquid'?" by Michael Andrews

Machine Learning

Definition: practice of using algorithms and large datasets to train computers to recognize patterns and apply learned patterns to complete new tasks

Purpose: enables AI tools to improve API documentation through pattern recognition in existing documentation, automated categorization, and predictive suggestions

Related Terms: AI, genAI, Natural Language Processing

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

MCP server

Definition: acronym for Model Context Protocol server; a server implementation that enables AI assistants to programmatically access external tools, data sources, and services through a standardized protocol; acts as a bridge between AI models and the resources they need to complete tasks

Purpose: provides a standardized way for AI assistants to interact with tools and data that exist outside their training data; enables technical writers to build workflows where AI can access internal documentation systems, retrieve files from cloud storage, search knowledge bases, or execute commands—all through a consistent API-like interface; particularly relevant for documentation teams looking to integrate AI assistance into their existing toolchains without rebuilding infrastructure

How MCP Servers Work:

- MCP servers expose resources, such as files, database records, or API endpoints, and tools, such as search functions, file operations, or commands, through a JSON-RPC interface

- AI assistants connect to MCP servers as clients, sending requests to access resources or invoke tools

- the protocol handles authentication, authorization, and data exchange between the AI and external systems

- multiple MCP servers can run simultaneously, each providing access to different tools or data sources
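The request and response flow can be sketched with plain JSON-RPC 2.0 messages. The sketch uses the `tools/call` method name and a result shape (a `content` list of text items) that mirror the MCP specification; check the current spec before relying on either. The `search_docs` tool, its placeholder data, and the in-process `handle` function are hypothetical. A real server would be built with an MCP SDK and run over a transport such as stdio or HTTP.

```python
import json


def search_docs(query: str) -> str:
    """Hypothetical tool backed by placeholder data."""
    pages = {"authentication": "placeholder text for the authentication page"}
    return pages.get(query.lower(), "No matching page")


TOOLS = {"search_docs": search_docs}


def make_tool_call(request_id: int, name: str, arguments: dict) -> str:
    """Client side: build a JSON-RPC 2.0 request that invokes a tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def handle(raw: str) -> str:
    """Toy server-side dispatch that handles only tools/call."""
    request = json.loads(raw)
    if request.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}  # standard JSON-RPC code
        return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "error": error})
    params = request["params"]
    tool = TOOLS.get(params["name"])
    if tool is None:
        error = {"code": -32602, "message": f"Unknown tool: {params['name']}"}
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
    text = tool(**params.get("arguments", {}))
    result = {"content": [{"type": "text", "text": text}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


request = make_tool_call(1, "search_docs", {"query": "authentication"})
print(handle(request))
```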

Example: updating an API changelog using MCP servers

Common Use Cases for Tech Writers:

- searching internal documentation systems - Confluence, SharePoint, Google Drive

- fetching API specifications from version control - GitHub, GitLab

- accessing customer support data to identify documentation gaps

- automating repetitive documentation tasks across multiple platforms

- integrating AI assistance with existing docs-as-code workflows

Related Terms: AI agent, authentication, JSON, JSON-RPC, metaprogramming, orchestration, REST API, webhook API

Sources:

- GitHub Repository, Anthropic: "Model Context Protocol servers"

- LF Projects, LLC.: "What is the Model Context Protocol (MCP)?"

metaprogramming

Definition: "writing code that writes code" - programming technique where code reads, generates, analyzes, or transforms other programs as data; in API docs contexts, it encompasses writing LLM skills, automation scripts, agentic workflows, and prompt instructions that generate or maintain docs

Purpose: enables tech writers to automate repetitive documentation tasks — release note generation, table updates, endpoint validation — and build reusable instructions that shape how LLMs produce, transform, and surface docs; shifts writer effort from execution to architecture and context curation

Historical Context: has roots in list-processing languages of the 1970s and 1980s, particularly Lisp, where the ability to treat code as data enabled early AI applications on specialized Lisp machine hardware; the paradigm re-emerges in LLM tooling with a significant twist: prompt engineering can be understood as pseudocode that gained execution capability; like pseudocode, prompts describe intent in natural language rather than formal syntax, but unlike pseudocode, they are interpreted and acted upon directly by a model; when those instructions shape how a model generates other content, prompt engineering becomes metaprogramming, and the distinction between building a system and explaining it collapses

Why this belongs in AI & APIs: describes a programming paradigm that has taken on new relevance for tech writers specifically through LLM tooling; Claude Skills, Cursor Rules, Copilot Agent Skills, and agentic CI/CD pipelines are applied forms of metaprogramming; the traditional software engineering definition belongs in Tools & Techniques, but the AI docs application belongs here, where writers interact with models as programmable systems rather than passive generation tools

metaprogramming vs related terms

| Term | Description | Metaprogramming Relationship |
|---|---|---|
| automation scripting | executes predefined tasks with fixed logic | metaprogramming generates or modifies the logic itself: a script that runs tasks is automation; a script that writes other scripts is metaprogramming |
| prompt engineering | instructs a model how to reason, structure output, or behave | applied metaprogramming for LLMs: instructions that shape how a model generates other content; the distinction blurs when prompts become reusable, parameterized systems |
| pseudocode | expresses logic in human-readable form, independent of any language or execution environment | the communication counterpart: describes what a program should do without being executable; metaprogramming is executable, pseudocode deliberately isn't |

Example: a tech writer authors a Claude Skill that instructs an LLM to generate consistently structured endpoint descriptions from OpenAPI spec changes on every release — the skill itself is a metaprogram: a set of instructions that produces documentation code rather than writing each entry manually
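The broader sense of the definition, code that reads other programs as data, has a compact illustration in Python's standard `ast` module. The script below parses source code without running it and generates a Markdown reference stub from function names, parameters, and docstrings. The sample module and its functions are hypothetical.

```python
import ast

# Hypothetical module to document; it is parsed, never executed.
SOURCE = '''
def get_user(user_id: str) -> dict:
    """Return the profile for one user."""

def list_users(limit: int = 20) -> list:
    """Return a page of user profiles."""
'''


def reference_stub(source: str) -> str:
    """Treat source code as data: parse it and emit Markdown for each top-level function."""
    tree = ast.parse(source)
    sections = []
    for node in tree.body:
        if isinstance(node, ast.FunctionDef):
            params = ", ".join(arg.arg for arg in node.args.args)
            doc = ast.get_docstring(node) or "TODO: missing docstring"
            sections.append(f"## `{node.name}({params})`\n\n{doc}\n")
    return "\n".join(sections)


print(reference_stub(SOURCE))
```

A skill that drives an LLM does the same thing one level up. Its natural-language instructions, rather than Python, determine what the generated docs look like.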

Related Terms: AI agent, CI/CD pipeline, docs-as-code, Large Language Model, MCP server, OpenAPI Specification, orchestration, prompt engineering

Sources:

- DEV Community: "A Practical Guide to Metaprogramming in Python" by Karishma Shukla

- passo.uno: "New habits for tech writers in the age of LLMs" by Fabrizio Ferri Benedetti

- Wikipedia: "Metaprogramming"

Natural Language Processing

Definition: also known as NLP; computer's ability to analyze and generate responses that mimic human language use through machine learning on large text datasets

Purpose: powers features in documentation tools such as search capability, autocomplete, spell-check, and automated translation of API documentation

Example: NLP enables smart search in API documentation that understands queries like "how to authenticate" and returns relevant authentication endpoints

Related Terms: AI, AI agent, Large Language Model, Machine Learning

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

orchestration

Definition: coordination of multiple AI models, tools, agents, or automated processes toward a larger goal; in documentation contexts, the design and management of systems where LLMs, APIs, and automated workflows operate together to produce, validate, or maintain content

Purpose: enables tech writers to move from executing individual tasks to architecting systems that execute on their behalf — release note pipelines, multi-step agentic workflows, and LLM-powered validation chains are all orchestration; the writer's role shifts from author to system designer and context curator

Why this belongs in AI & APIs: orchestration as a general computing concept - process scheduling, microservices coordination - belongs in Workflows & Methodologies; the documentation-specific application - designing systems where models and tools collaborate to generate, transform, and surface content - is an emergent practice specific to LLM-era tech writing

orchestration vs related terms

| Term | Description | Distinction |
|---|---|---|
| automation scripting | executes fixed, predefined logic | orchestration coordinates dynamic, multi-step systems where outputs of one process become inputs to another, often involving models making decisions at runtime |
| metaprogramming | instructions that shape how a model or program behaves | operates at the instruction level; orchestration operates at the system level, coordinating which models, tools, and agents run, in what order, and with what context; a Claude Skill is metaprogramming, the pipeline that invokes it is orchestration |

Example: a tech writer designs an agentic CI/CD pipeline that triggers on every API release — an LLM reads the OpenAPI diff, a second agent drafts affected reference entries, a validation step runs docs-as-tests against the new endpoints, and a final agent opens a pull request with the changes; the writer authored the system, not the content
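The control flow of that pipeline can be sketched as a sequence of stages. Each stage's output becomes the next stage's input, and a validation gate stops the run on failure. Every stage is a hypothetical stub standing in for an LLM call, a docs-as-tests run, or a pull request API. Only the orchestration logic is meant to be illustrative.

```python
from typing import Callable

Stage = Callable[[dict], dict]


def read_diff(ctx: dict) -> dict:
    # Stub for "an LLM reads the OpenAPI diff": here, list newly added paths only.
    return {**ctx, "changed": sorted(ctx["new_paths"] - ctx["old_paths"])}


def draft_entries(ctx: dict) -> dict:
    # Stub for the drafting agent.
    return {**ctx, "drafts": {path: f"Reference entry for {path}" for path in ctx["changed"]}}


def run_doc_tests(ctx: dict) -> dict:
    # Stub for docs-as-tests: every changed path needs a draft.
    passed = all(path in ctx["drafts"] for path in ctx["changed"])
    return {**ctx, "tests_passed": passed}


def open_pull_request(ctx: dict) -> dict:
    # Stub for the final agent; a real step would call a Git hosting API.
    return {**ctx, "pr_title": "docs: update reference for " + ", ".join(ctx["drafts"])}


def orchestrate(stages: list[Stage], ctx: dict) -> dict:
    """Run stages in order, halting as soon as the validation gate fails."""
    for stage in stages:
        ctx = stage(ctx)
        if ctx.get("tests_passed") is False:
            return {**ctx, "halted_at": stage.__name__}
    return ctx


pipeline = [read_diff, draft_entries, run_doc_tests, open_pull_request]
result = orchestrate(pipeline, {"old_paths": {"/users"}, "new_paths": {"/users", "/payments"}})
print(result["pr_title"])
```

Passing one shared context dictionary keeps each stage swappable. Replacing a stub with a real model call does not change the orchestrator.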

Related Terms: agent configuration, AI agent, docs-as-tests, Large Language Model, metaprogramming, MCP server, prompt engineering

Sources:

- LangChain Docs: "LangChain overview"

- passo.uno: "New habits for tech writers in the age of LLMs" by Fabrizio Ferri Benedetti

prompt engineering

Definition: practice of crafting effective instructions and queries to AI systems to generate desired outputs

Purpose: enables documentation teams to consistently collect useful results from AI tools by providing clear context, constraints, and expected output formats

Example: requesting "Generate an OpenAPI description for a GET endpoint that retrieves user profiles, including response codes and example JSON" rather than "describe this endpoint"
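The stronger request works because it states context, constraints, and output format explicitly. A small template makes those parts reusable across endpoints. The field names, the section wording, and the `/users/{id}` endpoint below are one hypothetical structure, not a standard.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    task: str
    context: str
    constraints: list[str]
    output_format: str


def render(spec: PromptSpec) -> str:
    """Assemble a prompt with labeled sections so nothing is left implicit."""
    rules = "\n".join(f"- {rule}" for rule in spec.constraints)
    return (
        f"Task: {spec.task}\n\n"
        f"Context:\n{spec.context}\n\n"
        f"Constraints:\n{rules}\n\n"
        f"Output format: {spec.output_format}"
    )


spec = PromptSpec(
    task="Generate an OpenAPI description for a GET endpoint that retrieves user profiles",
    context="GET /users/{id} returns a profile object; 404 if the user does not exist",  # hypothetical endpoint
    constraints=["Include response codes", "Include an example JSON response body"],
    output_format="OpenAPI 3 YAML for the single path item",
)
print(render(spec))
```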

Related Terms: agent configuration, AI, AI agent, genAI, Large Language Model, metaprogramming, orchestration

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"

RAG

Definition: acronym for Retrieval-Augmented Generation; AI technique that combines information retrieval with text generation to produce accurate, contextually relevant answers to technical questions; retrieves relevant docs and/or data from a knowledge base, then uses that context to generate responses rather than relying solely on the AI model's training data

Purpose: improves accuracy and reduces hallucinations in AI-powered docs tools by grounding responses in real source material; enables AI assistants to provide up-to-date, domain-specific answers with verifiable sources

RAG vs GraphRAG: GraphRAG is an implementation approach that uses knowledge graph structures to improve retrieval quality, but the fundamental concept of grounding AI responses in source material remains the same

Example: when a developer asks "How do I authenticate?", a RAG-based system fetches relevant sections from authentication docs, API reference, and code examples, then generates a synthesized answer such as "Based on the authentication guide, you need to include your API key in the Authorization header as a Bearer token" with citations to the source docs
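The retrieve-then-generate flow can be sketched without calling a model at all. This minimal version assumes a tiny in-memory corpus with hypothetical document IDs and text. It scores chunks by word overlap, whereas real systems typically use embeddings or a search index. It stops at building the grounded prompt, with source IDs, that would be sent to an LLM.

```python
import re

# Hypothetical knowledge base: source ID -> text chunk
DOCS = {
    "auth-guide#api-keys": "Include your API key in the Authorization header as a Bearer token.",
    "reference#errors": "A 401 response means the API key is missing or invalid.",
    "changelog#v2": "Version 2 renamed the users endpoint.",
}


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, k: int = 2) -> list[tuple[str, str]]:
    """Return up to k chunks sharing the most words with the question, skipping zero-overlap chunks."""
    q = tokens(question)
    scored = sorted(DOCS.items(), key=lambda item: len(q & tokens(item[1])), reverse=True)
    return [item for item in scored[:k] if q & tokens(item[1])]


def build_prompt(question: str) -> str:
    """Ground the model: answer only from retrieved chunks and cite their IDs."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(question))
    return (
        "Answer using only the sources below and cite source IDs in brackets.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


print(build_prompt("How do I authenticate with my API key?"))
```

Keeping the source IDs in the prompt is what makes the citations in the final answer verifiable against real docs.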

Related Terms: AI agent, docs-as-ecosystem, domain knowledge, Fin, Inkeep, Kapa.ai, knowledge graph, Large Language Model

Sources:

- Amazon Web Services, Inc.: "What is RAG (Retrieval-Augmented Generation)?"

- GeeksforGeeks: "What is Retrieval Augmented Generation (RAG)?"

- IBM: "What is GraphRAG?"

- Wikipedia: "Retrieval-augmented generation"

training data

Definition: large datasets used to teach machine learning models to recognize patterns and generate responses

Purpose: understanding training data limitations helps documentation teams recognize when AI outputs may contain biases, outdated information, or inaccuracies requiring verification

Related Terms: AI, genAI, Large Language Model, Machine Learning

Sources:

- University of Washington: "AI + Teaching"

- UW API Docs: Module 1, Lesson 4, "Intro to AI and API docs"